A 4-week test of messaging strategy, user behaviour, and measurement accuracy

Over the past four weeks, I ran a simple but deliberate experiment.

Each week, I published the same type of content on Facebook — a link to a long-form article — but changed one thing: the hook.

Not the topic. Not the audience. Not the format.

Just the opening line.

The goal was to understand something practical. Not what people say works, but what actually drives attention, clicks, and engagement when applied consistently over time.

Most advice around social media focuses on volume or tactics. Post more. Be consistent. Follow trends. What’s often missing is clarity around why something works, particularly when targeting a specific audience.

So instead of guessing, I tested four distinct hook styles over a four-week period:

- Calm framing

- Stronger framing (sharper hooks)

- Story-driven (personal credibility)

- Deliberately uncomfortable (conviction-led)

The intention wasn’t to find a universal answer. It was to understand how different types of messaging influence behaviour when the audience and content remain consistent.

The Hypothesis

Before starting, the assumption was relatively straightforward.

Stronger, sharper hooks would generate the highest click-through rates. They create tension quickly, interrupt scrolling behaviour, and demand attention. This is consistent with most modern advice around content distribution.

At the same time, there was a secondary hypothesis.

Calmer, more measured framing might result in fewer clicks, but higher-quality engagement once users reached the article. In other words, less noise, but more alignment.

The story-driven and uncomfortable hooks sat somewhere in between. One aimed to build trust through relatability. The other aimed to provoke a reaction strong enough to drive action.

How the Experiment Was Structured

Each week followed the same structure.

A single blog post was selected and shared across Facebook using multiple variations of the same hook style. The article itself did not change. The audience remained consistent. The only variable was the framing of the opening line.

This allowed for a cleaner comparison. Rather than testing different topics or formats, the experiment isolated the role of the hook in driving behaviour.

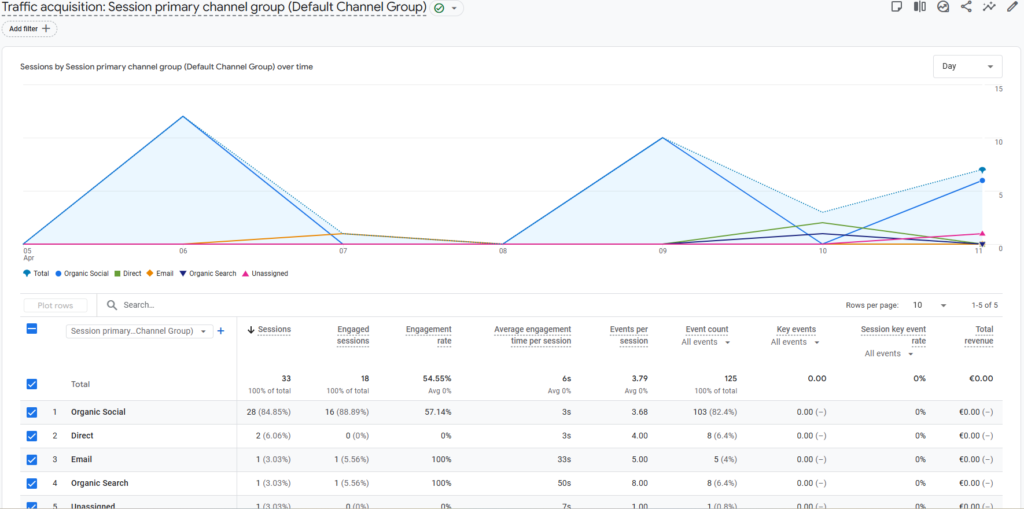

Performance was measured using:

- Sessions (traffic volume)

- Engagement rate

- Events per session

- Average engagement time

The aim was not just to measure clicks, but to understand what happened after the click.
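For anyone wanting to reproduce the three derived metrics from raw counts, here is a minimal sketch. It assumes GA4-style definitions: engagement rate as engaged sessions divided by sessions, and average engagement time taken per session. The class, the field names, and the numbers are hypothetical placeholders, not figures from this experiment.

```python
from dataclasses import dataclass


@dataclass
class WeekTotals:
    """Raw weekly counts (hypothetical field names, not a GA4 export schema)."""
    sessions: int
    engaged_sessions: int
    event_count: int
    engagement_seconds: float


def derive_metrics(week: WeekTotals) -> dict:
    """Turn raw weekly counts into the per-session metrics listed above."""
    if week.sessions <= 0:
        raise ValueError("sessions must be positive")
    return {
        "engagement_rate": week.engaged_sessions / week.sessions,
        "events_per_session": week.event_count / week.sessions,
        # Assumption: engagement time is averaged per session, not per user.
        "avg_engagement_seconds": week.engagement_seconds / week.sessions,
    }


# Placeholder numbers for illustration only, not data from this experiment.
example = WeekTotals(
    sessions=200,
    engaged_sessions=120,
    event_count=900,
    engagement_seconds=9000.0,
)
metrics = derive_metrics(example)
```

Keeping the raw counts separate from the derived ratios makes it easier to spot when one input, such as engagement seconds, is the part that looks wrong.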

What Happened (The Reality)

Across the four weeks, a few patterns became clear.

First, hooks matter — but not always in the way expected.

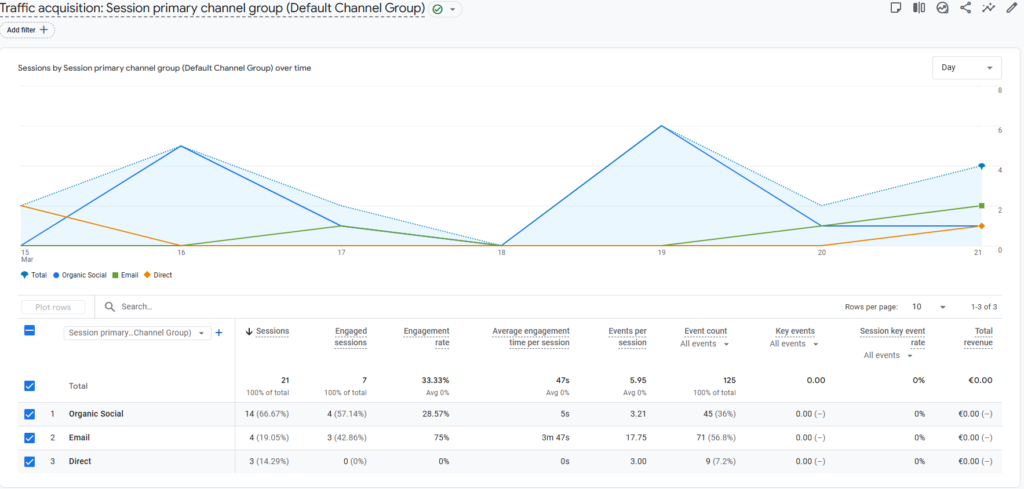

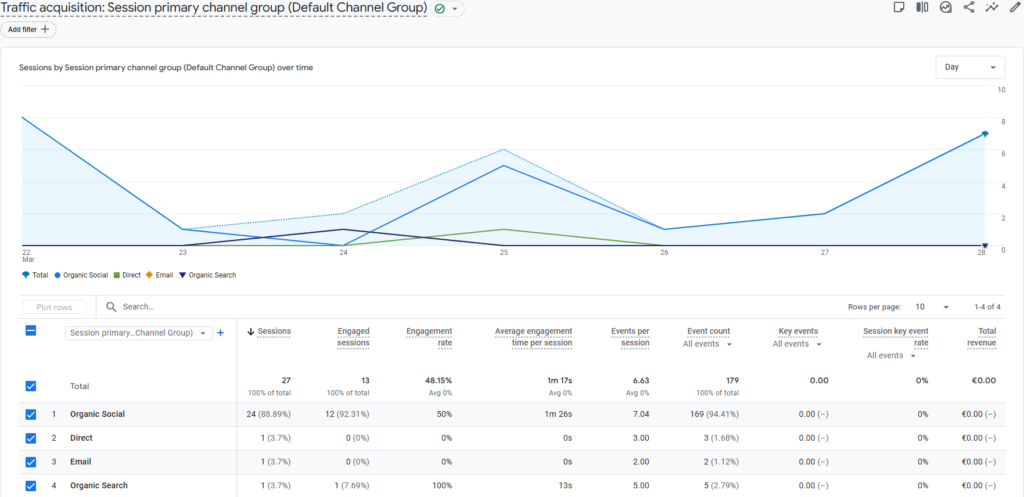

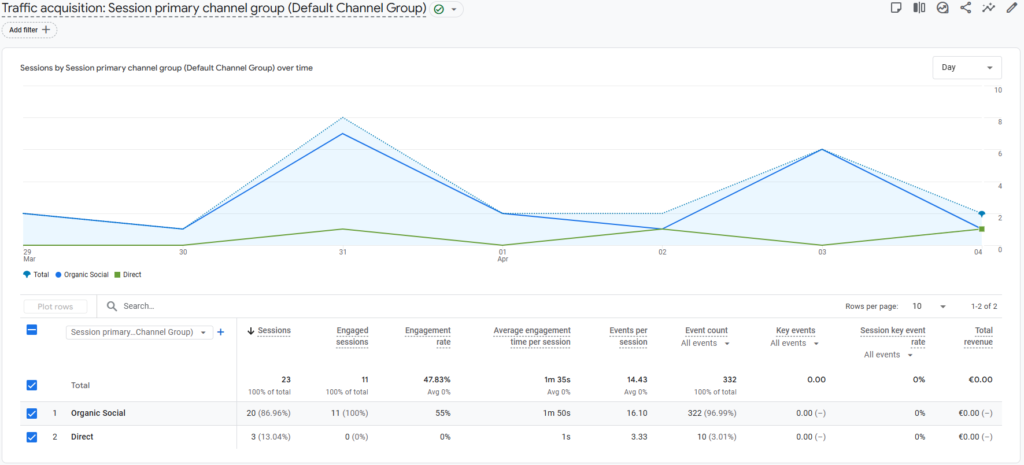

Weeks 2 and 3 produced the most reliable and meaningful engagement. Week 4 initially appeared strongest based on engagement rate, but inconsistent engagement time data suggests a measurement issue rather than a clear performance improvement.

The Data (And One Important Issue)

On the surface, the data showed strong engagement signals.

- Organic social drove the majority of traffic

- Engagement rates were close to target benchmarks

- Events per session were high

However, one metric stood out as inconsistent: average engagement time.

In several cases, engagement time appeared unrealistically low despite high interaction levels. This suggests a measurement issue rather than a behavioural one, likely related to how events were firing within GA4.

This became an unexpected but valuable outcome of the experiment.

It highlighted that behavioural data is only as useful as the way it’s measured. Before drawing conclusions, tracking accuracy needs to be reliable.

From a strategic perspective, this is just as important as the hook performance itself.
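One way to catch this kind of mismatch early is a simple sanity check that flags any week where interaction is high but engagement time per event is implausibly low. The sketch below is illustrative only: the function name, the thresholds, and the sample values are assumptions, not part of GA4 or of the data collected here.

```python
def flag_suspect_weeks(weeks, min_events_per_session=3.0, min_seconds_per_event=2.0):
    """Return labels of weeks whose engagement time looks too low for the
    amount of interaction recorded. Both thresholds are hypothetical."""
    suspect = []
    for label, m in weeks.items():
        events = m["events_per_session"]
        if events <= 0:
            continue  # nothing to compare against
        seconds_per_event = m["avg_engagement_seconds"] / events
        if events >= min_events_per_session and seconds_per_event < min_seconds_per_event:
            suspect.append(label)
    return suspect


# Placeholder values for illustration only, not data from this experiment.
weeks = {
    "week_a": {"events_per_session": 4.5, "avg_engagement_seconds": 45.0},
    "week_b": {"events_per_session": 6.0, "avg_engagement_seconds": 3.0},
}
suspect = flag_suspect_weeks(weeks)  # week_b: 6 events in 3 seconds is flagged
```

A flagged week is a prompt to check the tracking setup, not proof that the data is wrong.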

What This Experiment Actually Showed

The biggest takeaway isn’t that one hook style “wins.”

It’s that alignment matters more than intensity.

A strong hook can generate attention, but if it creates the wrong expectation, engagement suffers. A calmer hook may attract fewer clicks, but those clicks are often more relevant.

In other words:

- Attention can be engineered

- Engagement has to be earned

This is where most content strategies fall short. They optimise for the first and ignore the second.

What Changed Going Forward

This experiment has already shifted how I approach content distribution.

Hooks are no longer just about grabbing attention. They are about setting the right expectation for the experience that follows.

The next phase will focus on two areas:

- Measurement clarity

Fixing engagement tracking to ensure behavioural data reflects reality

- Deeper alignment testing

Refining how closely hooks match the tone and structure of the article itself

Rather than asking “what gets clicks,” the better question becomes:

What brings the right person into the article in the right frame of mind?

Final Thought

This wasn’t a large-scale experiment.

It was four weeks, one variable, and a defined audience.

But it was enough to show something useful.

Marketing doesn’t fail because people don’t see your content. It fails because the expectation you create doesn’t match the experience you deliver.

The hook is the first promise you make.

What matters is whether you keep it.

Here’s to progress (and fewer 404s).

Chris

| Hook Type | CTR (Sessions) | Engagement | Verdict |
| --- | --- | --- | --- |
| Calm | Medium | Low | Lowest |
| Stronger | High | High | Strongest |
| Story | Medium | High | Strong |
| Conviction* | High | Low – incorrect data | Potential – Need New Data |

\* Conviction hooks may drive clicks, but without reliable measurement, their true engagement risks being overestimated.

Hook Strategy – Framework

1. Attention is easy to create

Stronger hooks increase clicks, but not necessarily engagement.

2. Alignment matters more than intensity

The closer the hook matches the content, the better the outcome.

3. Expectation shapes behaviour

The hook determines how the content is experienced.